AI in Business: Why 42% of Enterprises Abandon Most of Their AI Projects

AI in business often fails because of poor data, weak planning, and low adoption. Understand why 42% of companies abandoned most of their AI initiatives and how enterprises can improve outcomes.

I’ve seen how most AI projects actually begin inside companies.

A leadership team gets excited about artificial intelligence in business. They hire a vendor or spin up an internal team, often comparing different AI development companies to get started.

Everyone is enthusiastic. Six months later, the pilot is "almost ready." Twelve months in, the project is quietly put on hold. No announcement. No clear reason given. Just a budget line that disappears from next year's plan.

This is not rare. This is common. After two decades of working in AI development and digital transformation, I have watched it happen across industries: manufacturing, fintech, healthcare, and retail. The technology is not the problem. The approach is.

The Scale of the Problem Nobody Talks About

Most enterprise leaders assume others are getting AI right while they are the ones struggling. That is not true. The struggle is widespread.

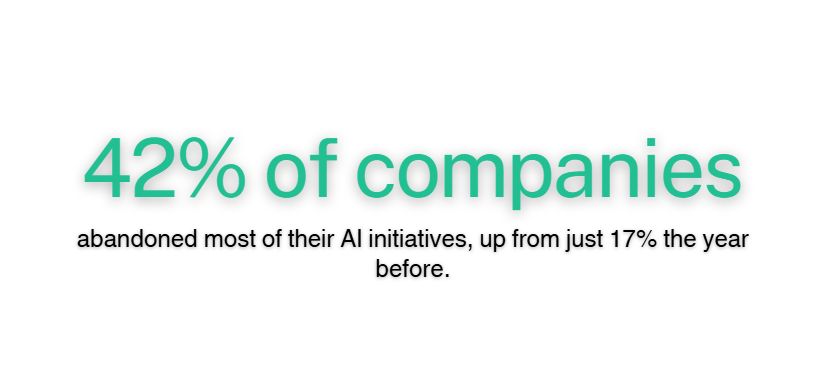

42% of companies abandoned most of their AI initiatives, up from just 17% the year before.

S&P Global Market Intelligence, survey of 1,000+ enterprises.

That jump, from 17% to 42% in a single year, is not a blip. It reflects a wave of projects that were greenlit without the right foundations in place. The average organisation scrapped 46% of its AI proofs-of-concept before they ever reached production. Cost pressures, data privacy concerns, and security risks were cited as the top obstacles.

Think about what that means in practice. A company spends six to twelve months building something. Nearly half of those builds never reach a single real user. For a mid-sized enterprise, that can mean hundreds of thousands of dollars in wasted engineering time, vendor fees, and internal resources. For larger organisations, it runs into the millions.
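To make that arithmetic concrete, here is a minimal Python sketch of the expected spend lost to abandoned proofs-of-concept. Only the 46% abandonment rate comes from the survey above. The function name, the five PoCs a year, and the $150,000 cost per PoC are hypothetical inputs chosen for illustration, not figures from any real organisation.

```python
# Hypothetical back-of-envelope estimate of spend lost to abandoned proofs-of-concept.
# The 46% default comes from the S&P Global figure quoted above;
# every other number here is an illustrative assumption, not data.

def wasted_poc_spend(num_pocs: int, cost_per_poc: float, abandon_rate: float = 0.46) -> float:
    """Expected spend on proofs-of-concept that never reach production."""
    if not 0.0 <= abandon_rate <= 1.0:
        raise ValueError("abandon_rate must be between 0 and 1")
    return num_pocs * cost_per_poc * abandon_rate


# Assumed inputs: a mid-sized enterprise runs 5 PoCs a year at $150,000 each.
estimate = wasted_poc_spend(5, 150_000)
print(f"Expected spend on abandoned PoCs: ${estimate:,.0f}")
```

With those assumed inputs, the estimate comes to about $345,000 a year. Swap in your own PoC count and cost. The point is that the loss grows in a straight line with every pilot started without a path to production.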

Why Artificial Intelligence in Business Is Harder Than It Looks

When companies evaluate AI solutions, they often treat them like a new SaaS tool: choose a platform, configure it, train the team, and go live. Artificial intelligence in business does not work that way.

The failure patterns I see repeat themselves. They are not random. They follow a predictable shape.

1. The pilot trap

Teams build a proof-of-concept in a controlled environment. It works beautifully in the sandbox. Then comes real deployment: integration with legacy systems, compliance workflows, actual user behaviour. Everything that was invisible in the sandbox becomes a wall. The model did not fail. The path from pilot to production was never designed.

2. Clean data is rarer than people admit

I have never walked into a large organisation and found its data in perfect shape. Quest's 2024 State of Data Intelligence Report found that 37% of organisations cited data quality as their top obstacle to strategic data use, followed by data silos at 24%. In practice, that means if your data is messy now, your AI will be messy too. The model amplifies what it is fed, not what you wish you had.

3. People are the missing variable

Most AI failure analyses focus on the technical side: infrastructure gaps, model limitations, and API issues. But the deeper failure is human. Pew Research Center surveys found that the share of Americans who feel more concerned than excited about the growing use of AI rose from 37% in 2021 to 52% in 2023. Your employees are part of that public.

When the people who are supposed to use a system do not trust it, adoption fails quietly, not loudly. The model keeps running. Nobody uses it. The project dies from neglect, not malfunction.

4. Optimising for the wrong thing

Engineering teams love optimising models. Improving accuracy from 89% to 94% feels like real progress. But while that work is happening, no one is solving the harder problem: how does this connect to a business outcome that a CFO would recognise? McKinsey's 2024 State of AI survey found that while 78% of organisations now use AI in at least one business function, only 17% report that 5% or more of their EBIT comes from generative AI use. Adoption is easy. Value is hard.
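One way to bridge that gap is to express an accuracy gain in money. The Python sketch below assumes the only business effect of the model is the cost of its wrong decisions. The function names, the 20,000 decisions a year, and the $40 cost per error are hypothetical placeholders, not benchmarks.

```python
# Hypothetical translation of a model-accuracy gain into a figure a CFO would recognise.
# Assumption: the model's only business impact is the cost of its wrong decisions.

def annual_error_cost(decisions_per_year: int, accuracy: float, cost_per_error: float) -> float:
    """Expected yearly cost of the decisions the model gets wrong."""
    return decisions_per_year * (1.0 - accuracy) * cost_per_error


def accuracy_gain_value(decisions_per_year: int, old_accuracy: float,
                        new_accuracy: float, cost_per_error: float) -> float:
    """Yearly saving from raising accuracy, all else being equal."""
    before = annual_error_cost(decisions_per_year, old_accuracy, cost_per_error)
    after = annual_error_cost(decisions_per_year, new_accuracy, cost_per_error)
    return before - after


# Assumed: 20,000 automated decisions a year; each error costs $40 to catch and fix.
saving = accuracy_gain_value(20_000, 0.89, 0.94, 40.0)
print(f"Estimated annual saving: ${saving:,.0f}")
```

Under those assumptions, the move from 89% to 94% accuracy is worth about $40,000 a year. If each error cost $0.40 instead, the same five-point gain would be worth about $400. That is exactly the conversation a CFO will want to have before funding more tuning.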

The Failure Pattern vs. the Success Pattern: A Direct Comparison

After working across dozens of deployments, I can tell you the difference between projects that reach production and those that get abandoned. It is rarely about the model.

| Factor | Projects That Fail | Projects That Succeed |
|---|---|---|
| Starting point | Technology-first ("we should use AI for this") | Problem-first ("this costs us X; can AI fix it?") |
| Data readiness | Assumed to be fine; found to be broken at launch | Audited and cleaned before model work begins |
| Stakeholders | IT-led; business teams consulted late | Business owners define success from day one |
| Pilot design | Sandboxed; no path to production planned | Pilot mirrors real-world constraints from the start |
| Human adoption | End users trained after the build; low trust | Users involved in design; trust built early |
| Success metric | Model accuracy scores | Revenue impact, cost reduction, time saved |
| Post-launch | Handed off and forgotten | Treated as a live product with ongoing iteration |

The pattern on the right is not complex. But it requires discipline and an honest conversation about readiness before any model is built.

What AI Development Companies Need to Tell Clients More Often

I will be direct about something that sits uncomfortably in our industry. Many AI development companies, including firms that compete with ours, lead with demos.

A slick prototype, a well-presented use case, a compelling ROI projection. What they do not lead with is an honest assessment of client readiness.

That is where the damage starts. A client who is not ready for production AI gets sold a pilot anyway. The pilot works. The production build does not. The relationship sours. And the client walks away believing artificial intelligence in business does not work, when the truth is that the process did not work.

"The model rarely breaks. It is the invisible infrastructure around it that buckles under real-world pressure."

That line from an industry analysis I read recently describes every troubled deployment I have seen. The model was fine. The data pipelines were not. The change management was not. The governance was not.

Gartner predicts that over 40% of agentic AI projects will be cancelled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. The cost of each failed project is not just financial. It makes the next budget conversation harder and builds internal scepticism that can take years to reverse.

Before You Build AI, Do This First

If you are planning an AI initiative or trying to recover one that has stalled, start with an honest data audit before you talk to any vendor, including us.

Ask your team three questions. Where does the data live that this AI would need? Is it clean, labelled, and accessible in a usable format? And does your infrastructure support the outputs this system will produce? If any of those answers are uncertain, that is your starting point. Not the model selection. Not comparing different AI development companies. The data.
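Part of the second question can be checked with a few lines of code before any vendor conversation. The Python sketch below profiles a batch of records for missing required fields, duplicated keys, and label coverage. The function name, field names, and sample rows are hypothetical. A real audit would also cover where the data lives, who can access it, and how fresh it is, which this sketch does not attempt.

```python
from collections import Counter
from typing import Iterable

# Hypothetical readiness check over records exported from one source system.
# Field names such as "customer_id" and "churned" are placeholders for illustration.

def audit_records(records: list[dict], required: Iterable[str], key: str, label: str) -> dict:
    """Summarise completeness, duplicate keys and label coverage for a batch of records."""
    total = len(records)
    if total == 0:
        return {"rows": 0}
    # Share of rows where each required field is missing or blank.
    missing_rate = {
        field: sum(1 for r in records if r.get(field) in (None, "")) / total
        for field in required
    }
    # Keys that appear more than once usually point to merge or export problems.
    key_counts = Counter(r.get(key) for r in records)
    duplicate_keys = sorted(k for k, n in key_counts.items() if n > 1 and k is not None)
    # Share of rows that carry a usable label for supervised training.
    label_coverage = sum(1 for r in records if r.get(label) not in (None, "")) / total
    return {
        "rows": total,
        "missing_rate": missing_rate,
        "duplicate_keys": duplicate_keys,
        "label_coverage": label_coverage,
    }


sample = [
    {"customer_id": "C1", "region": "EU", "churned": "yes"},
    {"customer_id": "C2", "region": "", "churned": "no"},
    {"customer_id": "C2", "region": "US", "churned": None},
    {"customer_id": "C3", "region": "US", "churned": "no"},
]
report = audit_records(sample, required=["customer_id", "region"], key="customer_id", label="churned")
print(report)
```

On the four sample rows, it reports a 25% missing rate for `region`, one duplicated `customer_id` (`C2`), and 75% label coverage. None of that is unusual for a first export, and all of it is cheaper to find now than after a model has been trained on it.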

Every successful AI deployment I have been part of, and there have been many, started with someone brave enough to say, "We are not ready yet." That honesty is what made them ready later.