4 Core Pillars of an AI Center of Excellence for Businesses

The four core pillars of an AI Center of Excellence (strategy, data, talent, and governance) give businesses a structured, measurable path to AI adoption.

Most companies I’ve worked with don’t fail at AI because of bad technology.

They fail because they run multiple pilots, get mixed results, and have no clarity on what to scale. Each team builds its own workflow. Nobody shares what works. And when leadership asks, “What’s our return on AI?”, there’s no clear answer.

If that sounds familiar, you’re not alone, and it’s not a technology problem.

What’s missing is an AI Center of Excellence (AI CoE), a structured framework that connects AI experiments to real business outcomes, governs how AI is used, and enables the organization to scale what actually works.

I’ve helped set this up for businesses across industries. Here’s what actually matters.

Why Most AI Efforts Stall Without an AI CoE Framework

Before we get into the pillars, let's be honest about what's happening in most organizations.

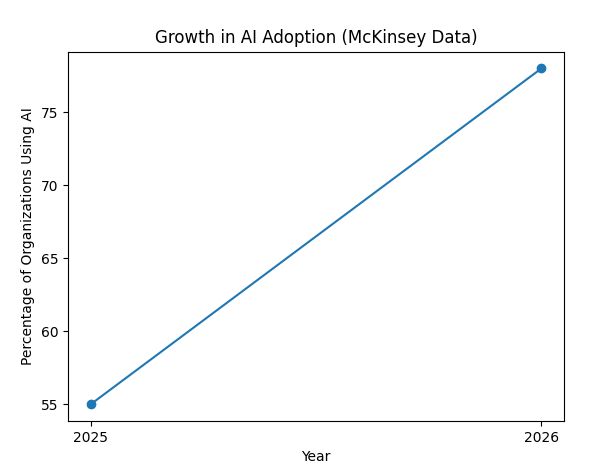

According to McKinsey, 78% of organizations now use AI in at least one business function, up sharply from 55% a year ago.

Yet more than 80% of organizations aren't seeing tangible enterprise-level EBIT impact from their AI investments, McKinsey also reports. That gap, between widespread usage and measurable business returns, is exactly the problem a CoE is built to close.

The pattern I see repeatedly: a finance team runs a forecasting model, the ops team builds a chatbot, and marketing experiments with content generation. Three separate initiatives, three separate learnings, three separate failures, none of which benefit the others. This is what fragmentation costs you.

An AI CoE framework stops that waste. Here's how it's built.

Pillar 1: AI Strategy and Use-Case Governance

The first thing an AI CoE does is say "no" more than it says "yes."

That may sound counterintuitive. But I've seen companies approve 30 AI initiatives in a year and ship exactly two that worked. The others consumed budget, distracted teams, and quietly died.

Use-case governance means every AI initiative gets evaluated against a common set of criteria: business impact, data availability, technical feasibility, and risk. Not every idea deserves a pilot. Not every pilot deserves to scale.
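To make the four criteria concrete, here is a minimal Python sketch of a weighted scoring rubric. Everything in it is an assumption for illustration: the 1 to 5 scale, the weights, the 3.5 pilot threshold, and the example scores. Risk is scored so that higher means riskier, then inverted so it pulls the total down.

```python
from dataclasses import dataclass

# Illustrative weights (assumption); they sum to 1.0.
WEIGHTS = {
    "business_impact": 0.40,
    "data_availability": 0.25,
    "technical_feasibility": 0.20,
    "risk": 0.15,
}
PILOT_THRESHOLD = 3.5  # hypothetical minimum score for approving a pilot


@dataclass
class UseCase:
    name: str
    business_impact: int        # 1 (low) to 5 (high)
    data_availability: int      # 1 (no usable data) to 5 (clean, accessible data)
    technical_feasibility: int  # 1 (unproven) to 5 (well-established approach)
    risk: int                   # 1 (low risk) to 5 (high risk)


def score(uc: UseCase) -> float:
    """Weighted score on a 1-5 scale; risk is inverted so lower risk scores higher."""
    total = (
        WEIGHTS["business_impact"] * uc.business_impact
        + WEIGHTS["data_availability"] * uc.data_availability
        + WEIGHTS["technical_feasibility"] * uc.technical_feasibility
        + WEIGHTS["risk"] * (6 - uc.risk)
    )
    return round(total, 2)


def triage(cases: list[UseCase]) -> list[tuple[str, float, bool]]:
    """Rank use cases by score and mark which ones clear the pilot threshold."""
    ranked = sorted(cases, key=score, reverse=True)
    return [(uc.name, score(uc), score(uc) >= PILOT_THRESHOLD) for uc in ranked]


if __name__ == "__main__":
    candidates = [  # made-up scores for illustration only
        UseCase("Demand forecasting", 5, 4, 4, 2),
        UseCase("Customer support deflection", 4, 4, 5, 2),
        UseCase("Automated contract drafting", 3, 2, 3, 4),
    ]
    for name, s, approved in triage(candidates):
        print(f"{name}: {s} -> {'pilot' if approved else 'hold'}")
```

With these made-up scores, the first two initiatives clear the bar and the third is put on hold. The exact weights matter less than the discipline of running every proposal through the same four questions.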

The governance layer also decides where AI belongs in your business. Not every process benefits from it. A focused AI Center of Excellence strategy identifies the two or three areas (usually operations, customer experience, or revenue generation) where AI can drive measurable impact in the next 12 months, and focuses resources there.

One client of mine spent their first year building AI tools across 11 departments simultaneously.

When we came in, we ran a three-week audit and found that 80% of the value sat in two areas: demand forecasting and customer support deflection.

We stopped everything else. Within six months, they had results they could actually report.

Without a governance layer, AI becomes a cost center dressed up as innovation.

Pillar 2: The Data Foundation Your AI CoE Framework Depends On

AI is only as good as the data it runs on. I know that sounds obvious. Yet this is where I see the most expensive mistakes.

Most organizations that come to us have three problems: data is siloed, data quality is inconsistent, and nobody owns data governance. You can buy the best AI platform in the world, and it will still produce unreliable outputs if the training data is a mess.

A strong AI CoE framework builds the data infrastructure that everything else runs on. This includes a clear data ownership model, data quality pipelines that catch and flag problems before they reach a model, and a centralized data catalog so teams can find what exists without reinventing datasets.
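A data quality pipeline can start as a set of rules applied to every record before it is used for training or inference. The sketch below is a minimal, dependency-free Python illustration; the field names (`order_id`, `region`, `units`), the specific rules, and the clean/flagged split are hypothetical choices, not a prescribed design.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional

Record = dict[str, Any]
Rule = Callable[[Record], Optional[str]]  # returns an error message, or None if OK


def require(name: str) -> Rule:
    """Rule: flag records where a required field is missing or empty."""
    def check(rec: Record) -> Optional[str]:
        if rec.get(name) in (None, ""):
            return f"missing {name}"
        return None
    return check


def in_range(name: str, lo: float, hi: float) -> Rule:
    """Rule: flag numeric values outside a plausible range (missing values are skipped)."""
    def check(rec: Record) -> Optional[str]:
        value = rec.get(name)
        if value is not None and not (lo <= value <= hi):
            return f"{name}={value} outside [{lo}, {hi}]"
        return None
    return check


@dataclass
class QualityReport:
    clean: list[Record] = field(default_factory=list)
    flagged: list[tuple[Record, list[str]]] = field(default_factory=list)


def run_checks(records: list[Record], rules: list[Rule], key: str) -> QualityReport:
    """Split records into clean and flagged, also flagging duplicate keys."""
    report = QualityReport()
    seen: set[Any] = set()
    for rec in records:
        problems = [m for m in (rule(rec) for rule in rules) if m is not None]
        if rec.get(key) in seen:
            problems.append(f"duplicate {key}")
        seen.add(rec.get(key))
        if problems:
            report.flagged.append((rec, problems))
        else:
            report.clean.append(rec)
    return report


if __name__ == "__main__":
    rules = [require("order_id"), require("region"), in_range("units", 0, 10_000)]
    rows = [  # hypothetical order records
        {"order_id": 1, "region": "north", "units": 120},
        {"order_id": 2, "region": "", "units": 80},
        {"order_id": 1, "region": "south", "units": -5},
    ]
    report = run_checks(rows, rules, key="order_id")
    print(f"{len(report.clean)} clean, {len(report.flagged)} flagged")
    for rec, problems in report.flagged:
        print(rec["order_id"], problems)
```

Routing flagged records to a named data owner, rather than silently dropping them or passing them through, is what connects a check like this to a clear data ownership model.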

This pillar takes the longest to build and gets the least attention in strategy decks. Invest in it anyway. Everything downstream depends on it.

Pillar 3: Building AI Talent and Culture

Here's something nobody wants to say out loud: you can't outsource your way to an AI-capable organization.

I've seen companies bring in vendors, deploy tools, and then find that nobody inside the business knows how to use them responsibly or maintain them over time. Vendor dependency is not an AI strategy.

A CoE builds internal capability deliberately. This doesn't mean hiring 50 data scientists. It means identifying the right roles (AI strategists, ML engineers, and domain experts who understand the business) and building a continuous learning function around them.

The CoEs that work treat internal training as a first-class initiative, not an afterthought. They run workshops. They create internal communities of practice. They celebrate people who use AI to do their jobs better and make those stories visible.

A Quick Comparison: Fragmented AI vs. CoE-Structured AI

| Area | Fragmented AI | CoE-Structured AI |
|---|---|---|
| Use-case selection | Ad hoc, driven by whoever has an idea | Prioritized against business goals |
| Data management | Siloed and inconsistent | Centralized and well governed |
| Teams | Unstructured and dependent on vendors | Structured, with internal skills |
| Results tracking | No clear results | Measured against clear KPIs |
| Risk management | Problems handled after the fact | Risks managed early through defined policies |

The difference isn't marginal. It's the gap between AI as a science project and AI as a business function.

Pillar 4: Governance and Risk - Where the AI CoE Framework Holds Everything Together

This is the pillar that most companies skip until something goes wrong.

AI governance is not about slowing things down. It's about making sure you can defend what you've built to your board, your regulators, and your customers. In practice, that comes down to three things:

- Bias audits. If an AI model is making decisions about customers, credit, or hiring, you need regular checks to ensure it isn't systematically disadvantaging certain groups. These need to be documented and repeatable.

- Model explainability. If a model flags a transaction as fraudulent or denies a loan application, someone in your organization needs to be able to explain why. "The model said so" is not a compliant answer in most regulated industries.

- Incident response. What happens when an AI system produces an output that causes harm to a customer, to your brand, or to a third party? Most companies don't have a protocol. They should.
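A documented, repeatable bias audit can start with something as simple as comparing outcome rates across groups. The Python sketch below applies the four-fifths rule, a screening heuristic from US employment guidance that flags any group whose selection rate falls below 80% of the highest group's rate. The group labels and the sample data are hypothetical, and this is one input to an audit, not a complete fairness assessment.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes (for example, approved or shortlisted) per group."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, positive in decisions:
        totals[group] += 1
        positives[group] += int(positive)
    return {group: positives[group] / totals[group] for group in totals}


def adverse_impact(decisions: list[tuple[str, bool]], threshold: float = 0.8) -> dict[str, float]:
    """Groups whose selection rate is below `threshold` times the highest group's rate.

    Returns {group: impact_ratio}; an empty dict means no group was flagged.
    """
    rates = selection_rates(decisions)
    best = max(rates.values(), default=0.0)
    if best == 0:
        return {}
    return {g: round(r / best, 3) for g, r in rates.items() if r / best < threshold}


if __name__ == "__main__":
    # Hypothetical audit sample: (group, model approved?) for 100 applicants per group.
    sample = (
        [("group_a", i < 50) for i in range(100)]
        + [("group_b", i < 30) for i in range(100)]
        + [("group_c", i < 45) for i in range(100)]
    )
    print(selection_rates(sample))
    print(adverse_impact(sample))
```

With this made-up sample, group_b's approval rate is 60% of group_a's, so it is flagged for review. A flag is a prompt to investigate the data, features, and thresholds behind the model, not proof of discrimination, and a clean result is not proof of fairness.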

What a Real AI Center of Excellence Strategy Looks Like in Practice

Let me give you a concrete picture.

A mid-sized logistics company we worked with had AI tools running in dispatch, customer communication, and fleet maintenance, all built independently, none connected. When something failed in dispatch, nobody in fleet maintenance knew. Data wasn't shared. Models weren't benchmarked against each other.

We built a CoE in two phases. Phase one (90 days): establish governance, appoint an AI lead, map existing initiatives, and audit data quality. Phase two (months 4–12): consolidate data infrastructure, retire two redundant tools, retrain three teams, and establish monthly performance reviews with the leadership team.

By month 14, they had a single view of AI performance across the business. The time it took to decide on a new AI initiative dropped from six months to six weeks. That's what structure does.

A Simple Step to Begin Your AI Strategy Today

You don't need a 50-person CoE to start. Most of the businesses I've worked with started with one honest meeting.

Get the key stakeholders in a room, or on a call, and answer three questions: What AI initiatives are currently running in our business? Who owns the results? What does success look like?

If you can't answer all three, you don't have a strategy. You have a collection of experiments. And experiments without ownership don't scale.

If you want to build an AI Center of Excellence that actually moves your business forward, Rubixe can help you design the right framework for your stage, your industry, and your team.